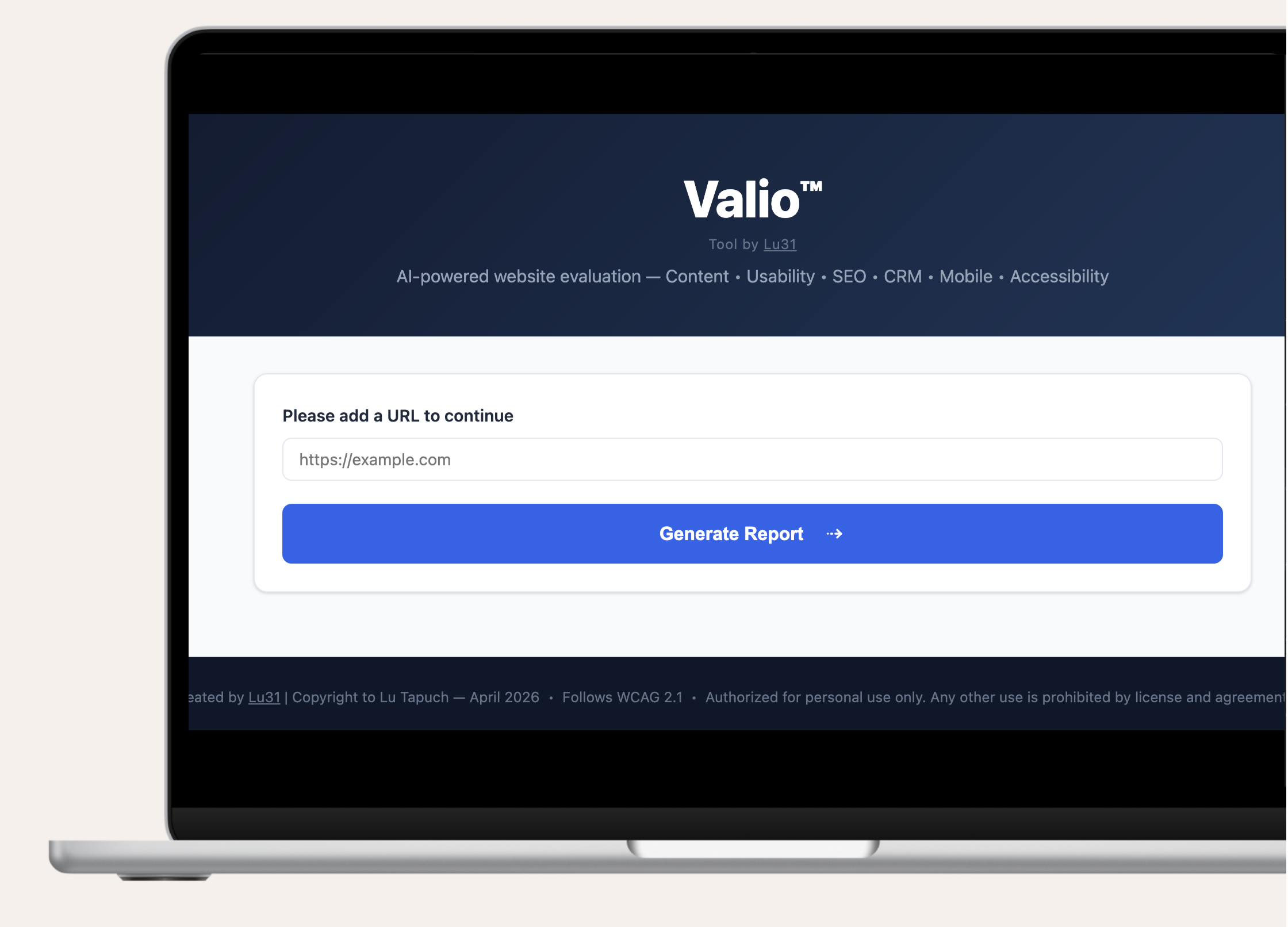

AI-Website Evaluation Tool

Context

Website evaluations are frequently reduced to fragmented audits. Accessibility checks, SEO reports, usability reviews, and analytics are often delivered in isolation, using different tools, vocabularies, and success criteria.

For UX designers and product teams, this creates two problems:

Insights are technically correct but strategically disconnected

Stakeholders receive scores, not direction

I identified an opportunity to use AI to unify these evaluation dimensions into a single decision-ready system, one that could be used not only to assess quality but also to structure productive conversations with non-design stakeholders.

The Process

01 / Problem Framing

Most site evaluation tools fail at the same point: translation.

Designers see patterns.

Stakeholders see numbers.

Teams struggle to agree on what to fix first and why.

Accessibility and WCAG compliance are treated as checkboxes rather than design signals

SEO and CRM insights rarely connect back to usability or content clarity

Reports lack prioritization, narrative, and forward-looking guidance

The core question became:

How might a single evaluation system expose strengths, gaps, and opportunities in a way that drives alignment and action?

02 / Approach

Key design decisions:

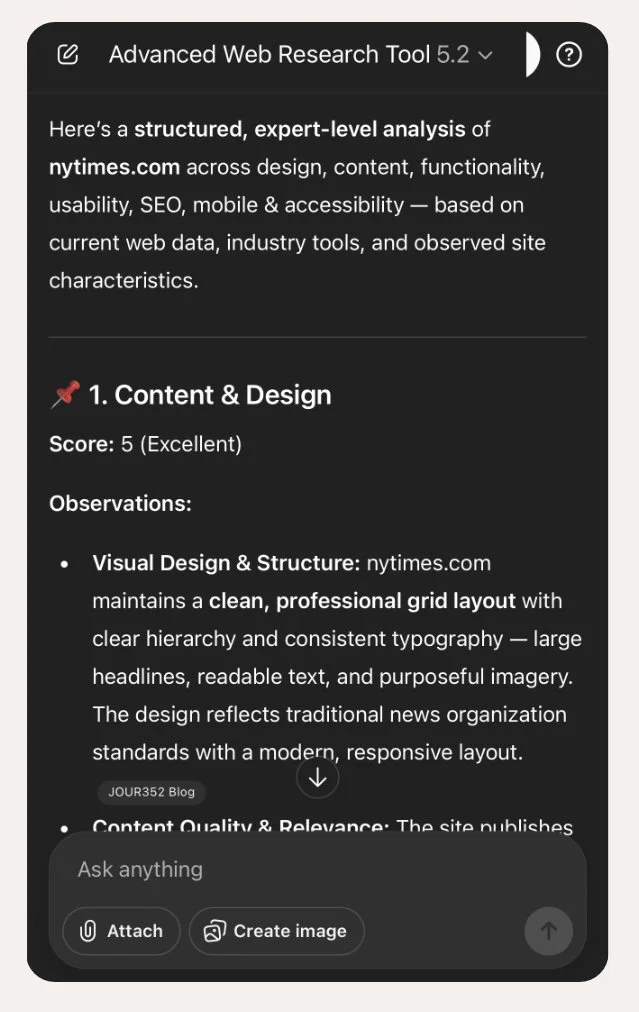

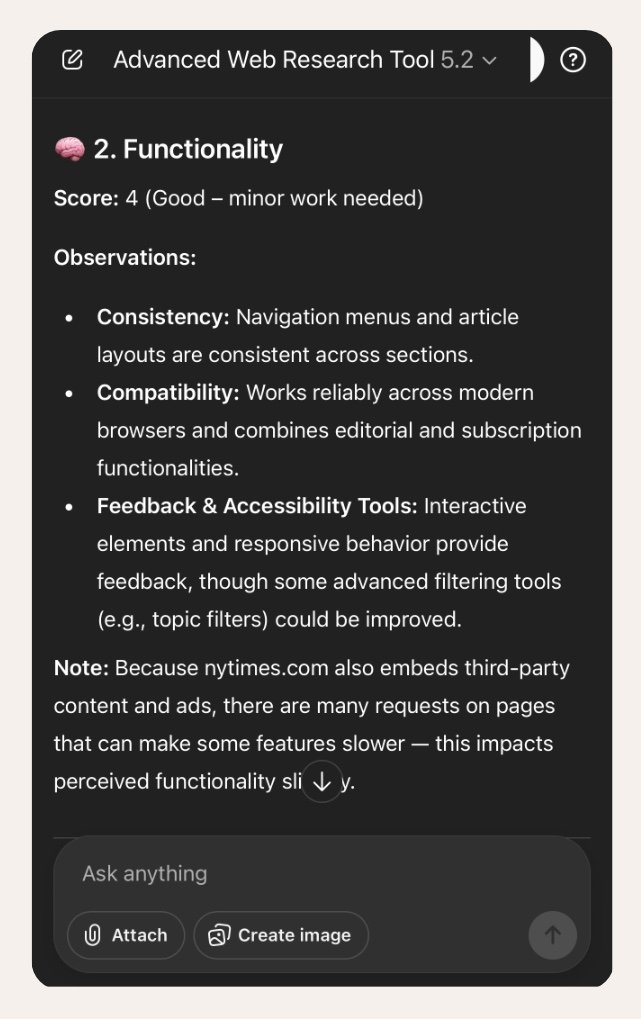

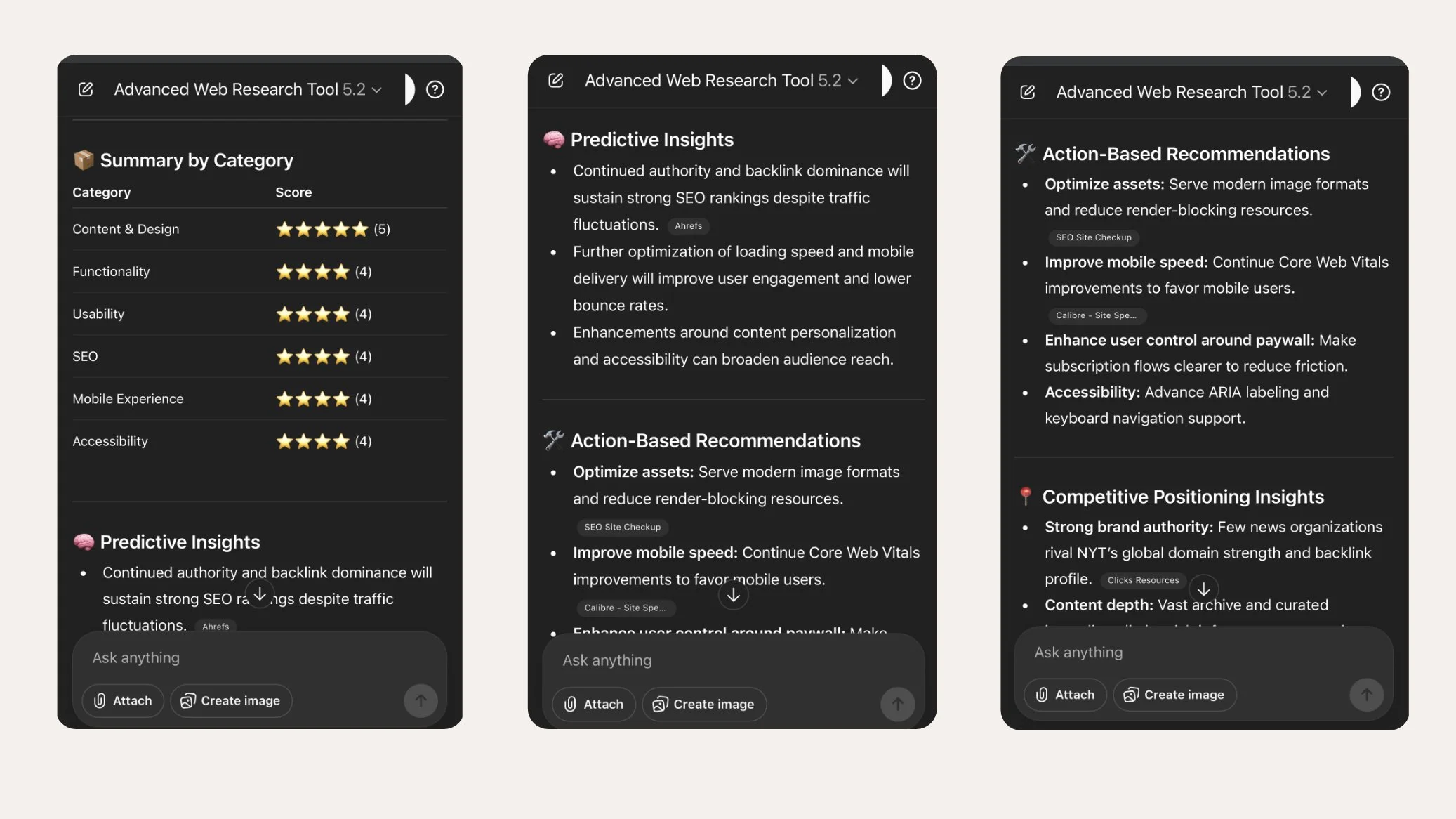

The tool evaluates websites across eight categories: content and design, functionality, heuristic usability, SEO, CRM, analytics, mobile, and accessibility (WCAG 2.1 aligned).

Each category uses explicit criteria, not abstract scoring.

Numeric Scoring With Qualitative Context

Every criterion is scored from 1 to 5

Each score requires a written observation

Category averages provide a quick signal without hiding nuance

This balances executive readability with designer-level depth.
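The scoring rules above can be sketched as a small data structure. This is a minimal illustration, not the tool's actual implementation: the class name, field names, and sample observations are all hypothetical, and only the 1 to 5 range, the mandatory observation, and the per-category average come from the description.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CriterionScore:
    """One scored criterion. Field names are illustrative assumptions."""
    category: str
    criterion: str
    score: int
    observation: str

    def __post_init__(self) -> None:
        # Every criterion is scored from 1 to 5.
        if not 1 <= self.score <= 5:
            raise ValueError(f"score must be between 1 and 5, got {self.score}")
        # Each score requires a written observation.
        if not self.observation.strip():
            raise ValueError("every score needs a written observation")


def category_averages(scores: list[CriterionScore]) -> dict[str, float]:
    """Average the criterion scores within each category."""
    grouped: dict[str, list[int]] = {}
    for item in scores:
        grouped.setdefault(item.category, []).append(item.score)
    return {
        category: round(sum(values) / len(values), 2)
        for category, values in grouped.items()
    }


# Invented sample data, for illustration only.
scores = [
    CriterionScore("Accessibility", "Color contrast", 2,
                   "Body text is hard to read against the hero image."),
    CriterionScore("Accessibility", "Keyboard navigation", 4,
                   "Menus are reachable, but the focus indicator is faint."),
    CriterionScore("SEO", "Page titles", 5,
                   "Titles are unique and descriptive on every template."),
]
averages = category_averages(scores)
```

Keeping the observation on the same record as the score means the average can be shown to executives while the reasoning behind each number stays one click away for designers.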

The final output is constrained to include predictive insights, action-based recommendations, and competitive positioning observations. This shifts the output from evaluation to strategy.

Prompt Governance and Guardrails

The system is designed to:

Avoid revealing internal instructions

Use a consistent evaluation order

Enforce WCAG best practices

Produce outputs usable in workshops, decks, or audits
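Two of these guardrails, a consistent evaluation order and a constrained final output, lend themselves to a simple structural check. The sketch below is an assumption about how such a check could look, not how the GPT enforces it: `check_report` and both constants are hypothetical names, and the category order is simply the order in which the categories are listed in this case study.

```python
# Assumed fixed order, taken from the category list in this case study.
EVALUATION_ORDER = [
    "Content and design",
    "Functionality",
    "Heuristic usability",
    "SEO",
    "CRM",
    "Analytics",
    "Mobile",
    "Accessibility",
]

# Sections the final output is constrained to include.
REQUIRED_SECTIONS = [
    "Predictive insights",
    "Action-based recommendations",
    "Competitive positioning observations",
]


def check_report(category_headings: list[str], final_sections: list[str]) -> list[str]:
    """Return a list of structural problems; an empty list means the report passes."""
    problems: list[str] = []
    if category_headings != EVALUATION_ORDER:
        problems.append("categories are missing or out of order")
    for section in REQUIRED_SECTIONS:
        if section not in final_sections:
            problems.append(f"missing final section: {section}")
    return problems


# A report with the right category order but one final section missing.
problems = check_report(
    EVALUATION_ORDER,
    ["Predictive insights", "Action-based recommendations"],
)
```

A check like this only verifies structure. Whether the content of each section is sound still needs a human reviewer.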

03 / Outcomes

The system enables UX designers to:

Rapidly understand what a website is actually doing well

Identify low-performing areas with contextual reasoning

Surface tradeoffs between usability, content, SEO, and conversion

Move stakeholder conversations from opinion to evidence-based prioritization

Rather than saying “this is not accessible”, the tool supports conversations like:

“This accessibility issue is also impacting readability, SEO, and trust.”

“Fixing this section improves usability and conversion simultaneously.”

Why This Matters

Decision enablement over compliance. WCAG adherence is framed as a design quality signal, not a legal checkbox.

Conversation-first structure. Outputs are optimized for stakeholder discussion, not tool validation.

Cross-disciplinary language. SEO, CRM, and UX insights are intentionally connected.

Tool-agnostic delivery. The system can be applied across industries and site types.

This project demonstrates that I can:

Translate complexity into clarity

Connect fragmented UX disciplines into a single system

Elevate designers from evaluators to strategic facilitators

Create shared language between design, product, and business stakeholders

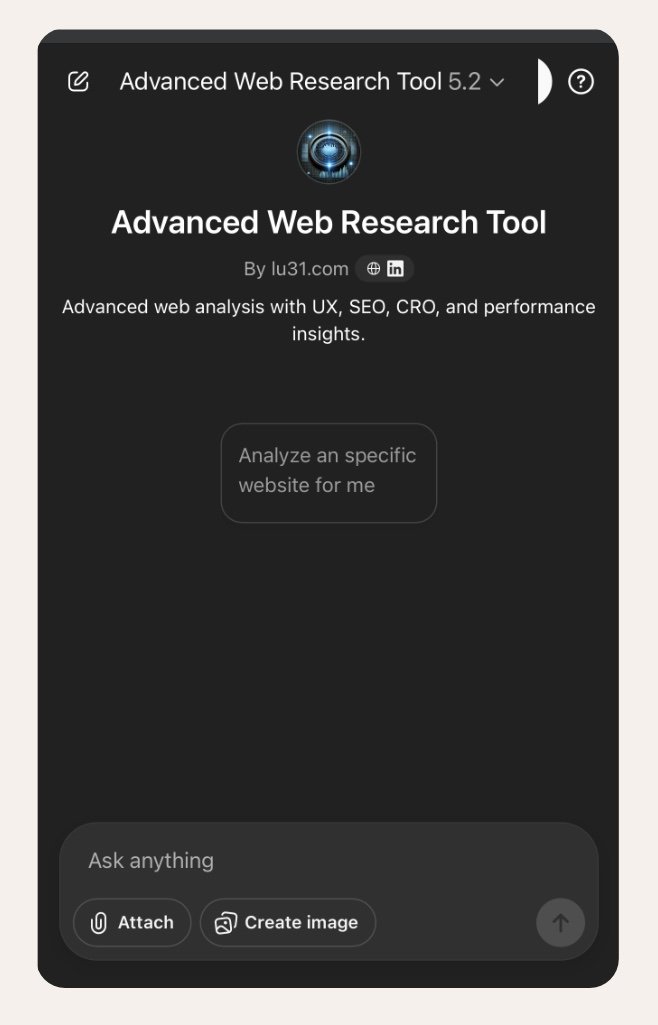

This tool also positions UX not as a cost or compliance function but as a decision-intelligence layer. See the GPT here.